À côté de munin il y a aussi à la radio collectd et graphite, ça fait des années mais l’utilisation n’a jamais vraiment décollé, c’est juste utilisé pour remplir une page avec trois graphes, niveau sonore, nombre d’auditeurs sur le stream, et état du switch studio.

Dans mes archives je trouve une première mention de l’utilisation à la radio fin 2012, c’est dans un fil de discussion qui parle d’ailleurs également de munin, je ne sais plus trop ce qui avait pu me pousser à expérimenter autre chose mais ça semblait à la mode il y a dix ans; je retrouve par exemple un article "An Introduction to Tracking Statistics with Graphite, StatsD, and CollectD", 23 mai 2014. C’est aussi la période d’écriture du site web de la radio (2013) et je me souviens avoir utilisé django-statsd pour visualiser les temps de rendu des différentes vues.

Dans ces années-là, circa 2014, j’avais produit une page récapitulative de la présence à l’antenne (vs la diffusion automatique, d’émissions préenregistrées ou de musiques), ça utilisait l’API de Graphite pour obtenir les données qui arrivaient formatées ainsi :

[

{

"tags": {

"name": "collectd_noc_panik.curl_json-switch.gauge-nonstop-on-air",

"summarize": "1h",

"summarizeFunction": "sum"

},

"target": "summarize(collectd_noc_panik.curl_json-switch.gauge-nonstop-on-air, \"1h\", \"sum\")",

"datapoints": [

[0.0, 1703890800], [0.0, 1703894400], [0.0, 1703898000], ...

]

}

]

c’est-à-dire une série de 0 quand le studio est à l’antenne et 1 quand c’est la diffusion automatique, associés à des timestamps. Tout ça était assemblé pour remplir un tableau de semaines,

Ça a tourné trois ans puis ça a été oublié mais hier j’ai eu envie d’actualiser ça et j’ai retrouvé une version du code a priori jamais utilisée, qui était encore en Python 2 mais améliorait la présentation pour utiliser la palette de couleurs Viridis (que je venais sans doute de découvrir via cette présentation à la SciPy).

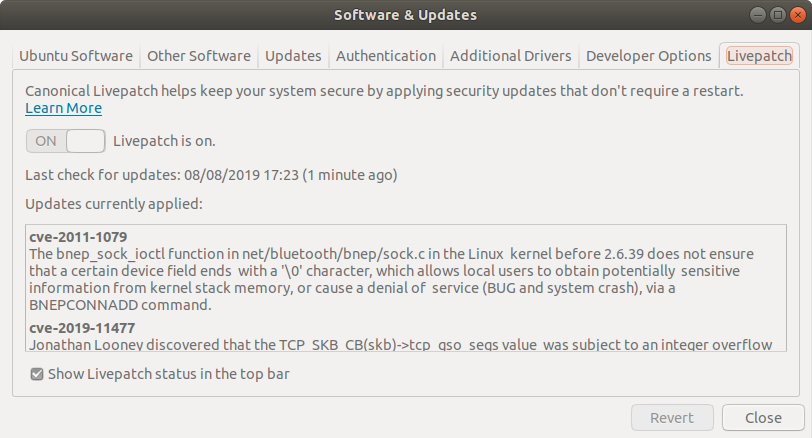

Après une première mise à jour du code pour fonctionner avec Python 3, je me suis dit que ça pouvait être une bonne idée de regarder si ça pouvait être fait via les données stockées dans Prometheus, pour pouvoir supprimer collectd et graphite (pour lesquels, contrairement à munin, j’ai peu d’attachement).

J’ai vite abandonné l’idée de faire faire par Prometheus les regroupements par heure, j’ai plutôt récupéré les échantillons pris toutes les cinq minutes, mais ça faisait trop pour l’API (qui annonce un max de 11000 valeurs possibles dans la réponse, alors qu’il en aurait fallu le quadruple pour couvrir une année), j’ai donc dû multiplier les appels, et par simplicité j’ai juste groupé par semaines,

start = datetime.datetime(year, 1, 1, 0, 0, tzinfo=ZoneInfo('Europe/Brussels'))

start = start - datetime.timedelta(days=start.weekday())

for i in range(53):

end = start + datetime.timedelta(days=7)

# TODO: switch to %:z once available (python 3.12)

start_time = start.strftime('%Y-%m-%dT%H:%M:%S%z').removesuffix('00') + ':00'

end_time = end.strftime('%Y-%m-%dT%H:%M:%S%z').removesuffix('00') + ':00'

resp = requests.get(

'.../prometheus/api/v1/query_range', params={

'query': 'panik_switch_status{info="nonstop-on-air"}',

'start': start_time,

'end': end_time,

'step': '5m'})

L’API de Prometheus attend une heure précisant le fuseau horaire (conforme à la RFC 3339), sous la forme +01:00 mais le formatage des dates dans Python proposait uniquement %z, qui donne +0100, il faut attendre Python 3.12 pour avoir %:z qui fournira +01:00. Ça arrivera avec la prochaine mise à jour Debian mais en attendant, code bricolé moche.

Pas de surprise, les données obtenues ressemblent à ce qu’il y avait via Graphite, les timestamps et les valeurs,

{

"data": {

"result": [

{

"metric": {

"name": "panik_switch_status",

"info": "nonstop-on-air",

"instance": "noc:9100",

"job": "node"

},

"values": [

[1704063600, "0"], [1704063900, "0"], [1704064200, "0"], ...

]

}

],

"resultType": "matrix"

},

"status": "success"

}

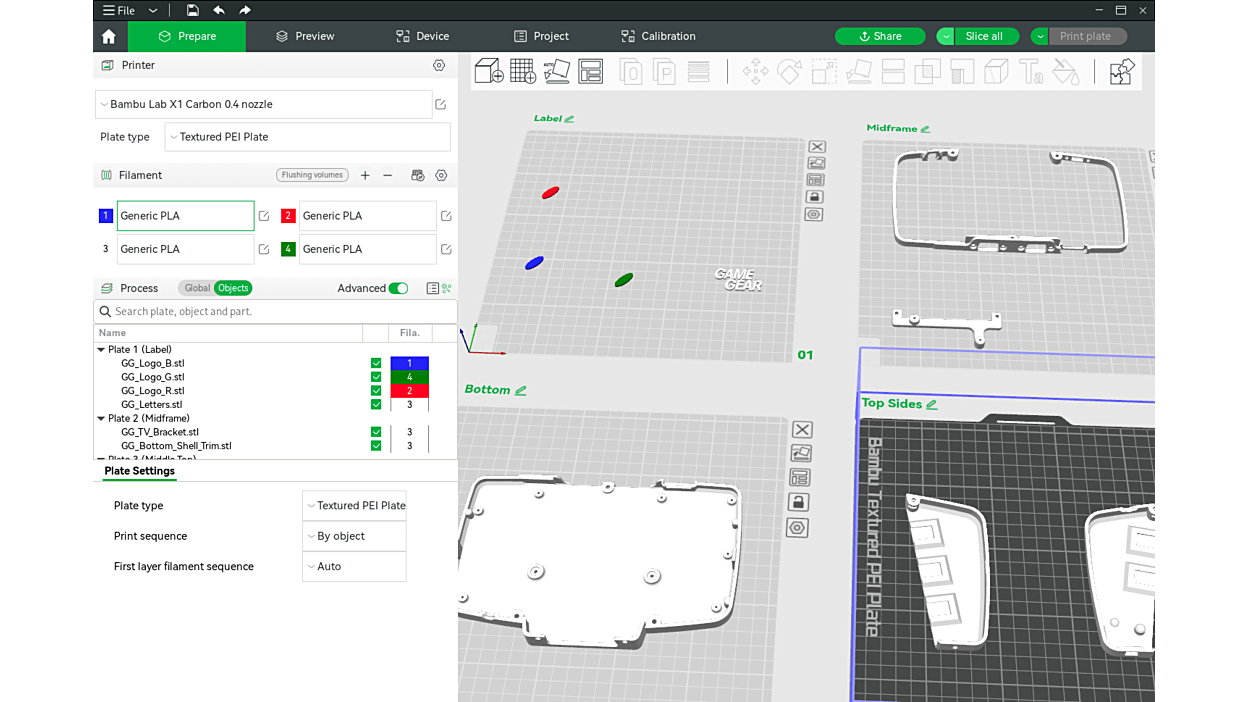

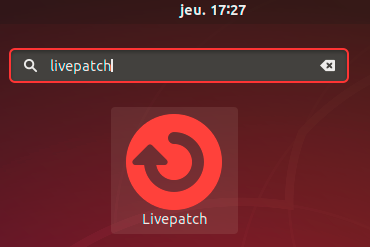

et tadam le résultat,

où on voit l’émission de la soirée/nuit du dimanche, la matinale du lundi et du mardi, une panne le mardi 20 février, etc.

exemple de Debian et Ubuntu

exemple de Debian et Ubuntu